Cloud Modernization Strategies: Transforming Legacy Applications into Scalable Digital Powerhouses

Cloud modernization strategies are essential in today's hyper-digital economy, where legacy applications are no longer just outdated; they are barriers to innovation, agility, and growth. Organizations that continue to rely on aging systems struggle with scalability, high maintenance costs, and limited integration capabilities, which makes modernizing legacy systems for the cloud a critical priority.

Cloud modernization is the strategic answer. It’s not just about moving applications to the cloud. It is about reimagining them as agile, scalable, and future-ready digital assets that drive business transformation.

At Prolifics, we see cloud modernization as a powerful enabler that helps enterprises unlock innovation, reduce technical debt, and accelerate digital transformation journeys.

What is Cloud Modernization?

Cloud modernization refers to transforming legacy applications, infrastructure, and data ecosystems into cloud-native or cloud-optimized environments. Unlike simple migration, modernization involves re-architecting, refactoring, or rebuilding applications to fully leverage cloud capabilities.

It is important to understand the difference between cloud migration and modernization: migration relocates infrastructure, while modernization focuses on long-term scalability and innovation.

This transformation is designed to enhance:

• Scalability

• Performance

• Cost efficiency

• Business agility

Organizations that modernize effectively gain a competitive edge by enabling faster innovation cycles and improved user experiences.

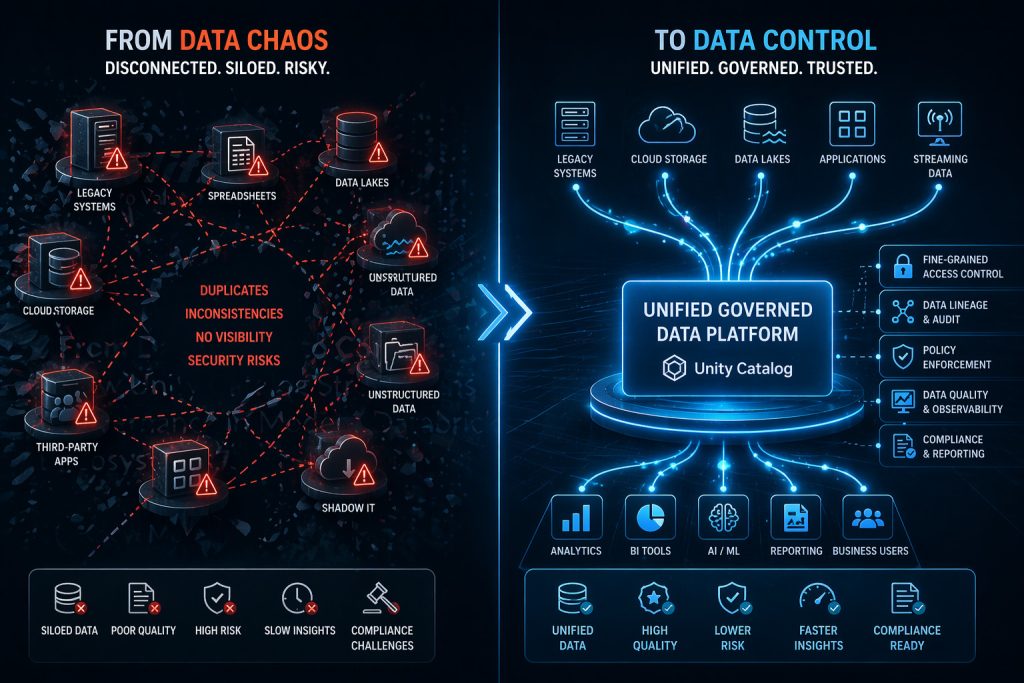

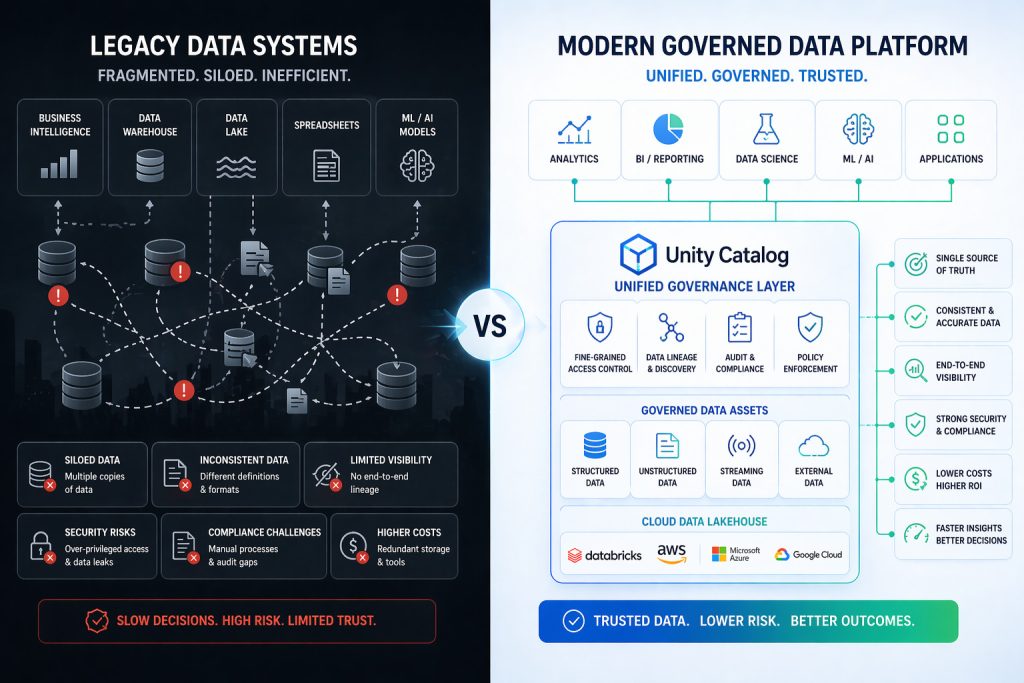

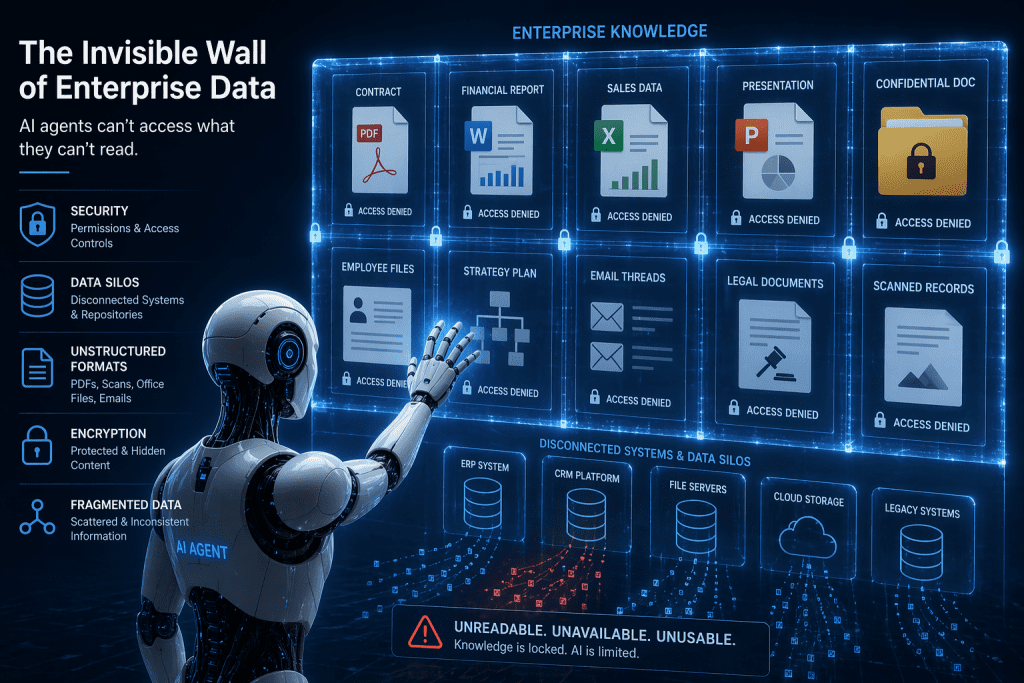

Why Legacy Systems Are Holding You Back

Legacy systems were built for a different era that prioritized stability over speed. Today, that trade-off no longer works.

Key challenges include:

• Limited scalability for growing workloads

• High operational costs due to outdated infrastructure

• Integration challenges with modern APIs and digital platforms

• Slow time-to-market for new features

As industry insights highlight, outdated systems hinder agility and make it difficult to execute modern digital strategies effectively.

In a world where AI, real-time analytics, and digital experiences define success, legacy systems simply cannot keep up.

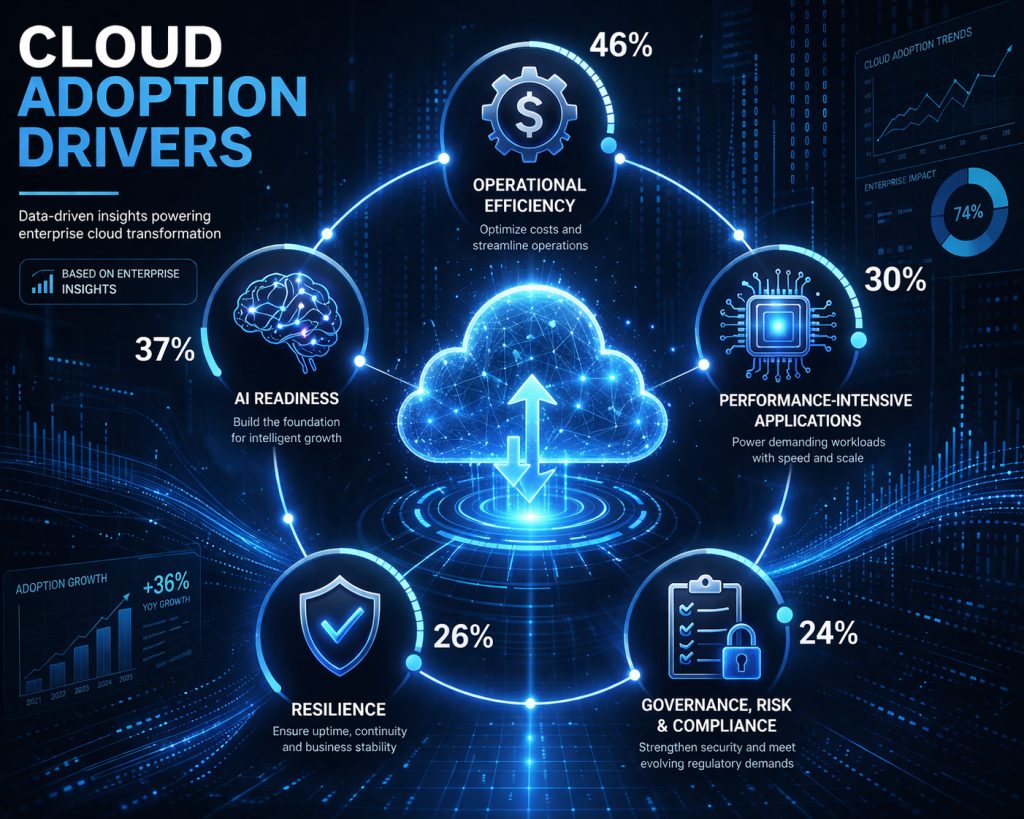

The Business Value of Cloud Modernization

Cloud modernization is not just a technical upgrade. It is a strategic investment in growth.

- Enhanced Scalability and Flexibility

Cloud-native architectures allow businesses to scale resources dynamically based on demand. This ensures optimal performance without over-provisioning. - Cost Optimization

Modernization reduces infrastructure and maintenance costs while improving resource utilization. Organizations often achieve significant savings through automation and optimized cloud usage. (Hexaware Technologies) - Faster Innovation

Modern platforms enable rapid development, testing, and deployment. This empowers teams to deliver new features faster. - Improved Resilience and Security

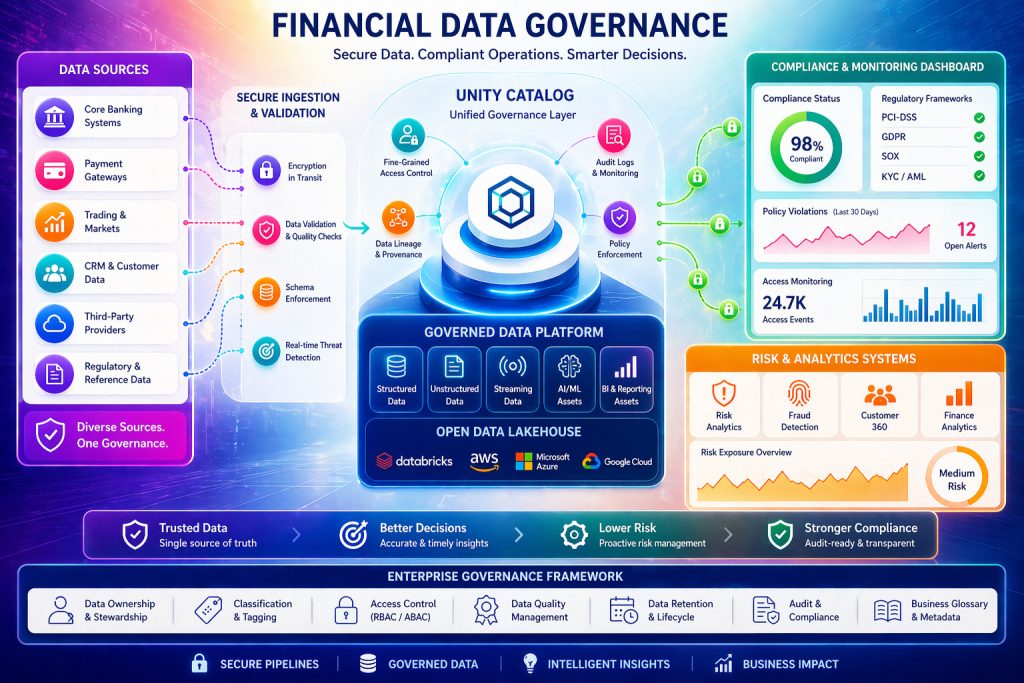

Cloud environments offer built-in redundancy, disaster recovery, and advanced security capabilities. - Data-Driven Decision Making

With modernized systems, businesses gain real-time insights and analytics capabilities. This enables smarter decisions.
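To make "scale resources dynamically based on demand" concrete, here is a minimal Python sketch of target-tracking scaling. It borrows the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler (desired replicas = ceil(current replicas × current metric / target metric)) in simplified form: the real autoscaler also applies a tolerance band and stabilization windows, which are omitted here. The function name, the utilization-percentage inputs, and the default bounds of 1 and 20 replicas are illustrative assumptions, not any platform's API.

```python
import math


def desired_replicas(current_replicas: int, current_utilization: float,
                     target_utilization: float, min_replicas: int = 1,
                     max_replicas: int = 20) -> int:
    """Return a replica count that moves average utilization toward the target.

    Simplified proportional rule (no tolerance band or stabilization window):
    ceil(current_replicas * current_utilization / target_utilization),
    clamped to [min_replicas, max_replicas].
    """
    if current_replicas < 1 or target_utilization <= 0:
        raise ValueError("need at least one replica and a positive target")
    proposed = math.ceil(current_replicas * current_utilization / target_utilization)
    # Clamp so a traffic spike cannot scale past budget, and idle periods
    # never scale below the minimum needed for availability.
    return max(min_replicas, min(max_replicas, proposed))


# Utilization values are percentages of requested CPU (an assumption).
print(desired_replicas(4, 90, 60))    # 6: scale out
print(desired_replicas(4, 30, 60))    # 2: scale in
print(desired_replicas(10, 150, 50))  # 20: capped at max_replicas
```

Four replicas running at 90% against a 60% target scale out to six, while the same four at 30% scale in to two; releasing that idle capacity is where the savings from avoiding over-provisioning come from.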

Key Cloud Modernization Strategies

Transforming legacy applications requires a structured approach. Leading organizations adopt a mix of strategies based on application complexity, business value, and technical feasibility.

- Rehosting (Lift and Shift)

This involves moving applications to the cloud with minimal changes. It is a quick way to reduce infrastructure costs but may not fully leverage cloud benefits.

- Replatforming

Applications are optimized for the cloud without significant architectural changes. This improves performance and scalability while maintaining core functionality.

- Refactoring (Re-architecting)

Applications are redesigned using microservices, containers, and APIs. This approach unlocks the most cloud potential and supports innovation.

- Rebuilding

Applications are rewritten from scratch using modern frameworks and technologies. This positions them for long-term scalability and flexibility.

- Replacing (SaaS Adoption)

Legacy systems are replaced with cloud-based SaaS solutions. This reduces maintenance overhead and accelerates deployment.

Most enterprises adopt a hybrid approach, combining multiple strategies to achieve optimal results.
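Because the choice is usually made per application, a portfolio team often writes its rules of thumb down explicitly. The Python sketch below encodes one possible decision order; the AppProfile fields, the 1-to-5 scales, and the thresholds are hypothetical and would need to be replaced by your own assessment criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Strategy(Enum):
    REHOST = "Rehosting (Lift and Shift)"
    REPLATFORM = "Replatforming"
    REFACTOR = "Refactoring (Re-architecting)"
    REBUILD = "Rebuilding"
    REPLACE = "Replacing (SaaS Adoption)"


@dataclass
class AppProfile:
    name: str
    business_value: int          # hypothetical scale: 1 (low) to 5 (high)
    complexity: int              # hypothetical scale: 1 (simple) to 5 (tangled monolith)
    commodity_function: bool     # True if a SaaS product already covers this capability
    codebase_maintainable: bool  # False if the code is too brittle to evolve


def recommend(app: AppProfile) -> Strategy:
    # Commodity capabilities rarely justify custom code.
    if app.commodity_function:
        return Strategy.REPLACE
    # Low-value applications: move them cheaply and invest elsewhere.
    if app.business_value <= 2:
        return Strategy.REHOST
    # Valuable applications whose code cannot be evolved: start over.
    if not app.codebase_maintainable:
        return Strategy.REBUILD
    # Valuable, complex applications gain most from microservices and containers.
    if app.business_value >= 4 and app.complexity >= 3:
        return Strategy.REFACTOR
    # Everything else: targeted cloud optimizations without re-architecture.
    return Strategy.REPLATFORM


portfolio = [
    AppProfile("hr-portal", 3, 2, commodity_function=True, codebase_maintainable=True),
    AppProfile("payments", 5, 4, commodity_function=False, codebase_maintainable=True),
    AppProfile("reporting", 3, 2, commodity_function=False, codebase_maintainable=True),
]
for app in portfolio:
    print(f"{app.name}: {recommend(app).value}")
```

The order of the checks is the design choice that matters: commodity capabilities are ruled out first, so no effort goes into re-architecting something a SaaS product already provides.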

Overcoming Cloud Modernization Challenges

While the benefits are compelling, cloud modernization comes with challenges that require careful planning:

• Complex legacy architectures

• Integration with existing systems

• Data migration risks

• Cost management issues

• Security and compliance requirements

Without a well-defined strategy, organizations risk delays, cost overruns, and incomplete transformation.

How to Address These Challenges

• Conduct a comprehensive cloud readiness assessment

• Prioritize applications based on business impact

• Use automation tools to accelerate migration

• Implement a Cloud Center of Excellence (CoE)

• Ensure security is integrated from the start
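Prioritizing applications by business impact is often done with a simple weighted score. This is a minimal Python sketch assuming three hypothetical inputs on a 0-to-10 scale (business impact, readiness from the assessment, and migration risk) and illustrative weights; none of these come from a standard framework.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    business_impact: float  # 0-10, hypothetical scale from stakeholder input
    readiness: float        # 0-10, taken from the cloud readiness assessment
    migration_risk: float   # 0-10, higher means riskier


# Illustrative weights (they sum to 1.0); tune them to your own priorities.
WEIGHTS = {"business_impact": 0.5, "readiness": 0.3, "migration_risk": 0.2}


def priority_score(c: Candidate) -> float:
    # Risk should lower priority, so it contributes as (10 - risk).
    return (WEIGHTS["business_impact"] * c.business_impact
            + WEIGHTS["readiness"] * c.readiness
            + WEIGHTS["migration_risk"] * (10 - c.migration_risk))


def prioritize(candidates: list[Candidate]) -> list[Candidate]:
    """Return candidates ordered from highest to lowest priority."""
    return sorted(candidates, key=priority_score, reverse=True)


backlog = [
    Candidate("customer-portal", business_impact=9, readiness=6, migration_risk=3),
    Candidate("internal-wiki", business_impact=4, readiness=9, migration_risk=1),
    Candidate("core-ledger", business_impact=8, readiness=3, migration_risk=8),
]
for c in prioritize(backlog):
    print(f"{c.name}: {priority_score(c):.1f}")
```

Inverting risk keeps every term pushing the score in the same direction, so a high-impact, low-risk application rises to the top of the plan.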

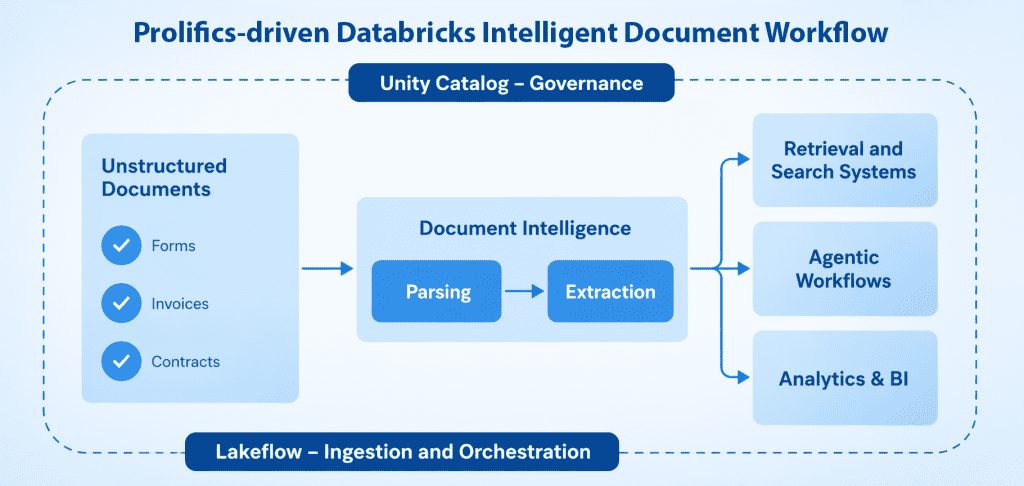

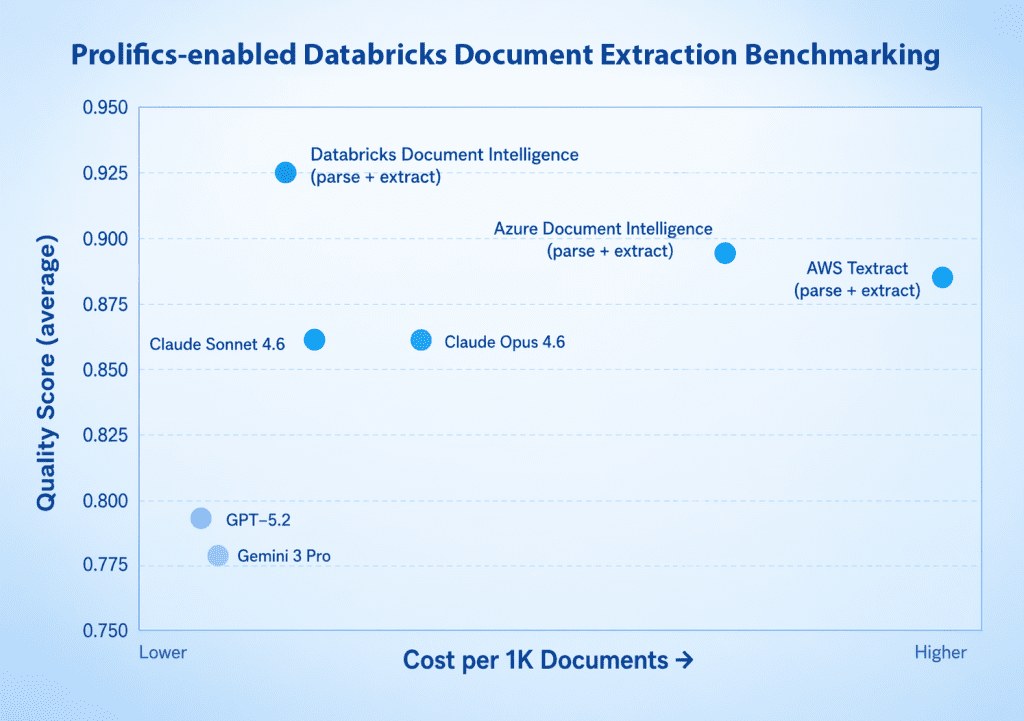

The Prolifics Approach to Cloud Modernization

At Prolifics, we go beyond migration. We deliver end-to-end cloud transformation that aligns technology with business outcomes.

- Strategic Assessment & Roadmap

We evaluate your application portfolio to identify modernization opportunities and define a clear transformation roadmap.

- Cloud-Native Architecture Design

Our experts design scalable, resilient architectures using microservices, containers, and APIs.

- Automation-Driven Transformation

We leverage AI and automation to accelerate code transformation, testing, and deployment. This reduces time-to-market.

- Seamless Integration

We ensure smooth integration with existing systems, enabling hybrid and multi-cloud environments.

- Continuous Optimization

Post-migration, we optimize performance, cost, and security to maximize ROI.

Our expertise across AWS, Google Cloud, and Salesforce enables us to deliver tailored solutions that meet your unique business needs.

Real Transformation: From Legacy to Digital Powerhouse

Imagine a financial services organization struggling with a monolithic legacy system. Deployment cycles take months, and scaling is nearly impossible.

Through cloud modernization:

• The application is decomposed into microservices

• APIs enable seamless integration with digital channels

• Automated pipelines accelerate deployment

• Cloud infrastructure ensures scalability and resilience

The result?

A future-ready digital platform that delivers faster services, improved customer experiences, and significant cost savings.
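Decomposing a monolith is rarely done in one cut. One widely used technique, often called the strangler fig pattern, places a routing layer in front of the legacy system and redirects capabilities to new microservices one at a time. The Python sketch below shows only that routing decision; it is not necessarily how the organization described above would proceed, and the path prefixes and service names are hypothetical.

```python
from dataclasses import dataclass, field

LEGACY = "legacy-monolith"  # hypothetical name for the existing system


def _owns(prefix: str, path: str) -> bool:
    # Match whole path segments so "/accounts" does not capture "/accountsettings".
    return path == prefix or path.startswith(prefix.rstrip("/") + "/")


@dataclass
class StranglerRouter:
    # Path prefix -> name of the microservice that now owns that capability.
    routes: dict[str, str] = field(default_factory=dict)

    def migrate(self, prefix: str, service: str) -> None:
        """Record that a capability has been extracted into a new service."""
        self.routes[prefix] = service

    def resolve(self, path: str) -> str:
        """Return the service that should handle a request path."""
        owners = [p for p in self.routes if _owns(p, path)]
        if not owners:
            return LEGACY  # not yet extracted: the monolith still serves it
        # The most specific (longest) prefix wins, so a sub-capability can be
        # extracted separately from its parent.
        return self.routes[max(owners, key=len)]


router = StranglerRouter()
router.migrate("/accounts", "accounts-service")
router.migrate("/accounts/statements", "statements-service")
print(router.resolve("/payments/123"))           # legacy-monolith
print(router.resolve("/accounts/42"))            # accounts-service
print(router.resolve("/accounts/statements/7"))  # statements-service
```

Because unmatched paths fall through to the monolith, each extraction can ship independently while the rest of the application keeps working.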

The Future is Cloud-Native

The rise of AI, data-driven decision-making, and digital ecosystems is reshaping enterprise IT. Organizations must move beyond legacy systems to remain competitive.

Industry trends show that cloud modernization is becoming essential, not optional, for businesses aiming to scale and innovate in the AI-driven era (TechRadar).

Those who modernize today will lead tomorrow.

Final Thoughts: Turn Your Legacy into a Competitive Advantage

Cloud modernization is not just a technology shift. It is a business transformation strategy. It empowers organizations to:

• Innovate faster

• Scale seamlessly

• Reduce costs

• Deliver superior customer experiences

At Prolifics, we help you transform legacy applications into scalable digital powerhouses by unlocking the full potential of the cloud.

Ready to Modernize?

Your legacy systems don’t have to hold you back.

Partner with Prolifics to build a cloud-first, future-ready enterprise where innovation, agility, and scalability drive success.

Let’s transform your digital future today.

FAQs

What is cloud modernization?

Cloud modernization is the process of transforming legacy applications, infrastructure, and data systems to leverage cloud-native technologies. It includes rehosting, refactoring, replatforming, or rebuilding applications to improve scalability, agility, and performance.

Why is cloud modernization important for businesses?

Cloud modernization enables organizations to reduce operational costs, improve system reliability, enhance security, and accelerate innovation. It helps businesses stay competitive by enabling faster deployments and better customer experiences.

What are the key approaches to cloud modernization?

Common strategies include:

• Rehosting (Lift and Shift): Moving applications to the cloud with minimal changes

• Replatforming: Making minor optimizations for cloud efficiency

• Refactoring: Redesigning applications to be cloud-native

• Rebuilding/Replacement: Developing new applications or adopting SaaS solutions

What challenges are associated with cloud modernization?

Organizations often face challenges such as legacy system complexity, data migration risks, security and compliance concerns, skill gaps, and potential downtime during transition.

How can organizations ensure a successful cloud modernization strategy?

Success depends on a clear roadmap, application assessment, choosing the right cloud model (public, private, hybrid), leveraging automation tools, and partnering with experienced cloud service providers to minimize risk and maximize ROI.