Artificial intelligence has evolved from passive assistants to agentic systems that think, decide, and act autonomously. These systems do not just generate responses. They execute workflows, trigger actions, and influence real-world outcomes.

But here is the uncomfortable truth. Without AI guardrails, agentic AI becomes a risk at scale.

From hallucinated outputs and biased decisions to data leaks and compliance violations, the risks of unchecked AI are real and growing. Enterprises rushing into AI adoption often overlook one critical layer: governance embedded into the AI lifecycle through a strong AI governance framework.

This is where AI guardrails come in not as restrictions, but as enablers of trust, scale, and responsible AI governance for enterprise-grade AI transformation.

What Are AI Guardrails?

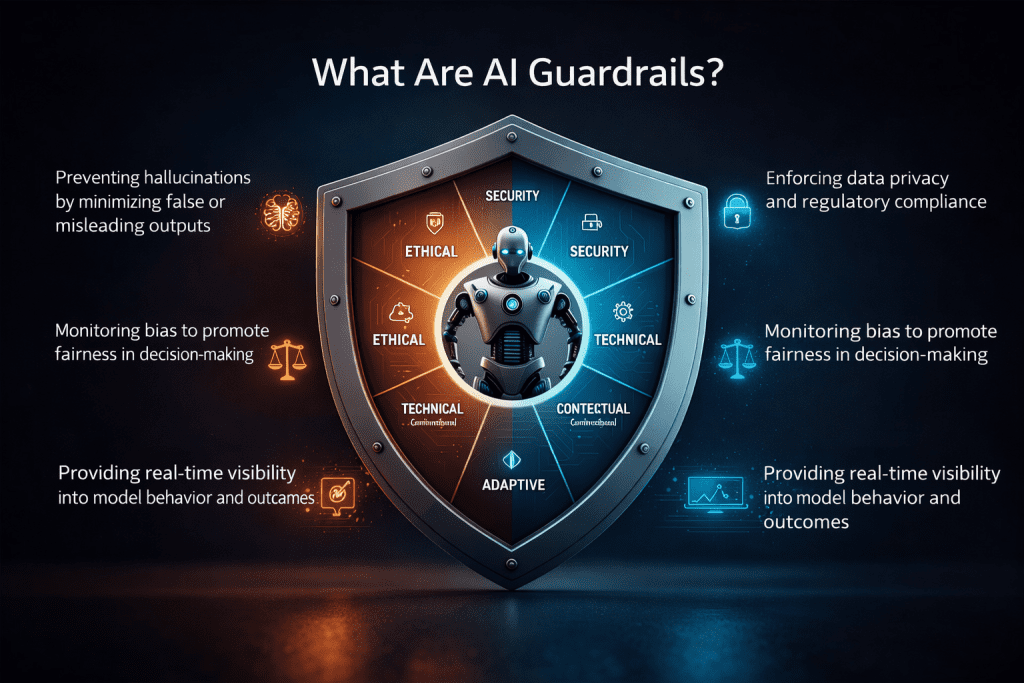

If you’re wondering what are AI guardrails in generative AI, they are mechanisms designed to ensure AI systems operate safely, ethically, and within defined boundaries.

Guardrails AI is an open-source platform designed to help developers and enterprises mitigate risks when deploying AI-driven solutions. It establishes a structured validation layer around both inputs and outputs, ensuring that AI models operate within defined boundaries and adhere to acceptable standards. They establish acceptable behaviors, define boundaries, and ensure AI systems operate within ethical, legal, and operational frameworks. In essence, AI guardrails enable the responsible and controlled use of AI.

They encompass a wide range of capabilities, including:

- Preventing hallucinations by minimizing false or misleading outputs and helping prevent AI hallucinations and bias in LLMs

- Enforcing AI compliance and data privacy and regulatory standards

- Monitoring bias to promote fairness in decision-making using ethical AI frameworks

- Providing real-time visibility into model behavior and outcomes

Importantly, AI guardrails are not limited to technical controls. They also include policies, processes, and human oversight that work together to guide AI systems from ideation through deployment and beyond.

The Critical Need for AI Guardrails in Development

AI has rapidly transformed software development. Tools such as GitHub Copilot, ChatGPT, and AWS CodeWhisperer are accelerating coding, automating testing, and streamlining bug resolution.

However, this increased speed introduces new risks, especially in the absence of strong governance and AI risk management practices.

One key challenge is over-reliance on AI-generated code without adequate validation. Research from Stanford and NYU indicates that developers who depend heavily on AI-generated solutions are more likely to introduce security vulnerabilities compared to those who do not.

This underscores the growing need for a robust AI governance framework that delivers real-time oversight, traceability, and accountability.

AI guardrails play a critical role in mitigating risks such as:

- Hallucinations, by ensuring outputs are accurate and verifiable

- Compliance violations, through continuous monitoring of regulations like GDPR and HIPAA

- Bias and discrimination, by auditing outcomes for fairness across diverse user groups

- Intellectual property risks, by detecting potential copyright or licensing issues

In enterprise environments, AI cannot function as a black box. Transparency, control, and trust are essential, and AI guardrails make this possible while strengthening LLM security and safety.

1. Input Guardrails: Securing the Front Door of Your AI

Every AI interaction starts with an input, and that is where risks begin. Agentic systems are vulnerable to prompt injection, malicious queries, and exposure of sensitive data. Without input validation, AI can be manipulated to produce harmful or unauthorised outputs.

Input guardrails act as the first line of defense and are a key part of AI guardrails for LLM security and compliance.

They ensure:

- Harmful or irrelevant queries are blocked

- Sensitive data requests are restricted

- Context remains aligned with business objectives

They define what your AI should never respond to, creating a strong foundation for safe interactions.

2. Output Guardrails: Ensuring Trustworthy Responses

LLMs are powerful, but they are not always accurate. They can hallucinate facts, produce biased content, or generate misleading information. Output guardrails act as a real-time quality control layer and are critical in how to prevent AI hallucinations and bias in LLMs.

They:

- Filter harmful or biased language

- Validate factual accuracy

- Enforce brand tone and compliance standards

For enterprises, this is not just about accuracy. It is about protecting reputation, customer trust, and regulatory alignment.

3. Data and Privacy Guardrails: Protecting What Matters Most

Data fuels AI, but it is also its biggest vulnerability. Without proper controls, LLMs can expose personally identifiable information, leak confidential data, or violate compliance frameworks.

Data guardrails ensure strict control over what AI can access, process, and share, strengthening AI compliance and data privacy.

They:

- Mask sensitive data

- Restrict access to secure datasets

- Prevent data leakage

These guardrails act as a policy enforcement layer, ensuring data usage aligns with governance standards and business policies.

4. Behavioral Guardrails: Controlling Autonomous Actions

Agentic AI can trigger workflows, call APIs, and execute multi-step decisions. Without behavioral guardrails, these systems may operate beyond their intended scope. This highlights why AI governance is important for agentic AI systems.

Behavioral guardrails define:

- What actions the AI can take

- When it can act

- Which systems or tools it can access

They ensure AI remains within defined roles and responsibilities, reducing operational risks and unintended consequences.

5. Monitoring and Audit Guardrails: Enabling Transparency and Accountability

AI systems must be observable, traceable, and auditable. Monitoring guardrails provide real-time visibility into AI behavior and support enterprise-level AI risk management.

They include:

- Logs of prompts, responses, and system actions

- Audit trails for compliance and governance

- Alerts for anomalies or unexpected behavior

This transforms AI from a black box into a transparent and controllable system, enabling enterprises to build trust and maintain accountability.

6. Human-in-the-Loop Guardrails: Keeping Humans in Control

No matter how advanced AI becomes, human oversight remains essential. Human-in-the-loop guardrails are a core component of responsible AI governance and modern ethical AI frameworks.

This is especially important in industries like healthcare, finance, and the public sector.

Human oversight ensures AI augments human intelligence rather than replacing it. It balances automation with accountability.

Why Guardrails Are the Backbone of Agentic AI

Agentic AI represents a shift from assistance to autonomous execution. This increases both opportunity and risk. Guardrails act as the control layer between innovation and enterprise risk.

They:

- Reduce hallucinations and misinformation

- Enforce compliance and ethical standards

- Protect sensitive data

- Align AI behavior with business goals

In simple terms, guardrails transform AI from a risky experiment into a scalable enterprise capability.

From Guardrails to Autonomous AI Governance

The future of AI is not just about smarter models. It is about smarter governance.

Leading organizations are adopting autonomous AI governance, where guardrails are embedded into development pipelines and enforced automatically.

This approach enables:

- Continuous compliance

- Real-time risk mitigation

- Scalable AI adoption

Guardrails become a combination of policies, processes, and technologies working together to manage AI responsibly across its lifecycle.

The Business Impact: Why This Matters Now

Organizations that ignore guardrails face serious consequences:

- Reputational damage

- Regulatory penalties

- Security vulnerabilities

- Loss of customer trust

Organizations that invest in AI governance gain:

- Faster and safer AI adoption

- Improved accuracy and decision-making

- Strong compliance posture

- Greater stakeholder confidence

How Prolifics Can Help You Build Responsible Agentic AI

At Prolifics, we help enterprises move from experimentation to enterprise-grade AI adoption securely and responsibly.

Our approach combines:

- AI governance frameworks

- Data security and compliance controls

- Intelligent automation and monitoring

- Scalable cloud and AI architectures

We ensure your AI systems are not only powerful, but also explainable, secure, compliant, and aligned with your business goals.

Because success in AI is not just about capability. It is about control, trust, and responsible execution.

Conclusion

Agentic AI is not just the future, it’s already reshaping how enterprises operate, decide, and innovate. But its true potential can only be realized when it is built on a foundation of trust.

Guardrails are not constraints, they are enablers. They empower organizations to scale AI responsibly, ensure compliance, and maintain control in an increasingly autonomous digital landscape.

Before deploying your next AI solution, ask yourself one critical question:

Do you have the right guardrails in place?

At Prolifics, we help organizations design and implement robust AI governance frameworks, combining strategy, technology, and security to ensure your AI initiatives are not only powerful, but also ethical, compliant, and future-ready.

Build AI with confidence. Build it with Prolifics.